AI Pseudo Intelligence, brilliance without a brain?

The unthinking thinking LLM machines

The unthinking pseudo-intelligent machine

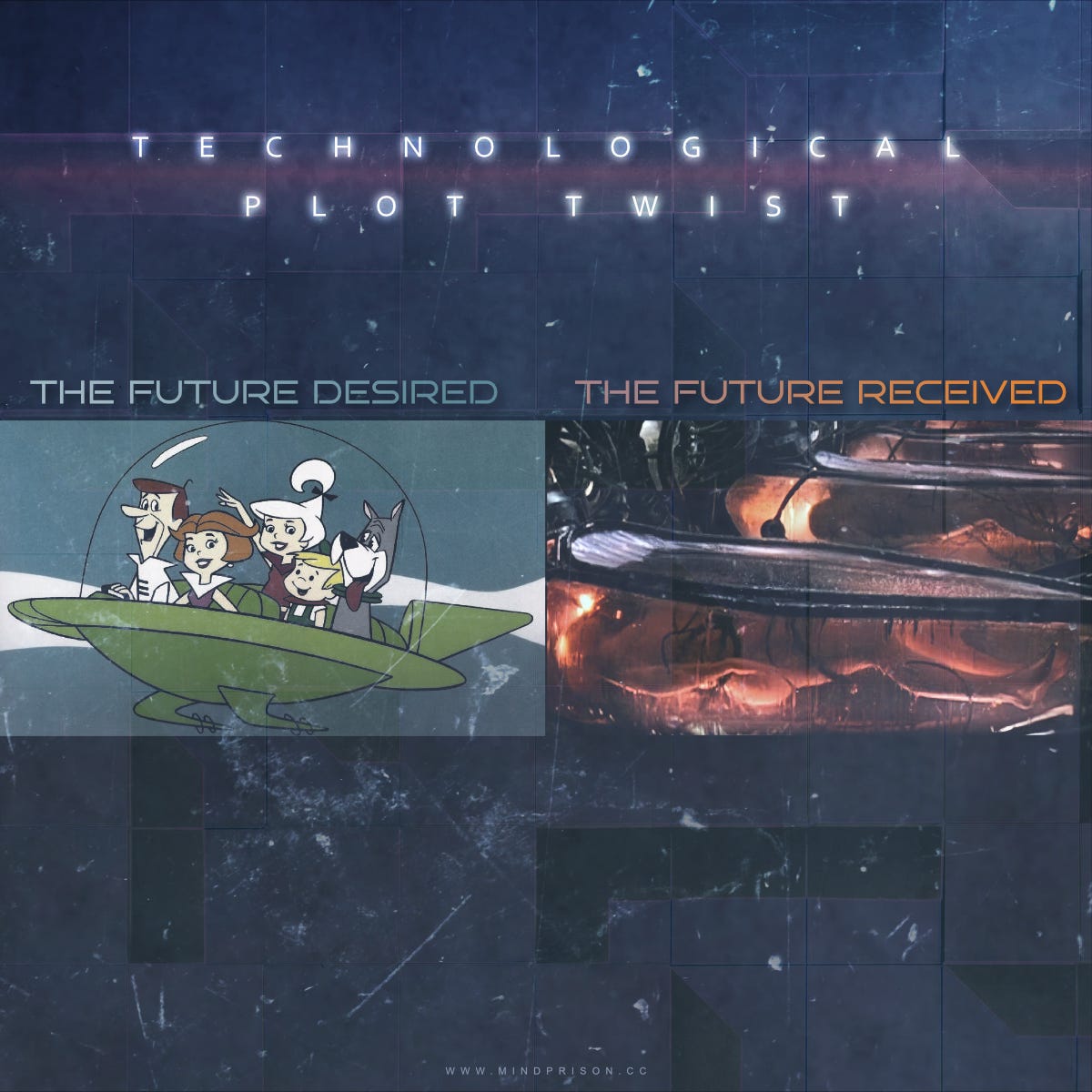

AI is either the most brilliant entity ever created or a hallucination machine that speaks in the mystic tongues of madness. As we travel further along this path, confident opinions are diverging. AGI is either nearly within our grasp or a mirage of wonders never attainable.

The observed capabilities lead to conflicting assessments of the nature of thought within the “mind” of the machine. These conflicts lead to debate over how far we have progressed towards AGI. Achieving AGI would mean we have accomplished the goal of machines that reason in a way similar to humans.

AI seemingly accomplishes miraculous tasks that could only be achieved by great intelligence; however, when capabilities are deeply probed, they reveal a different nature that fails to resemble logical reasoning.

We are left with a strange duality where the machine is not intelligent, does not think, and does not reason, but conversely is capable of beating you at tasks that we assumed required all of those attributes.

AGI will be forever almost here?

AGI is a bit of a nebulous concept as we have no precise definition or method to test for AGI. It has become clear as we progress further that we never anticipated a machine that would be incredibly capable at some tasks while also failing miserably at others. This has left us with continuously shifting goalposts and no agreed-upon definition for how achieving AGI could be determined.

Given the way AI is progressing, it may be that with current architectures we will forever be chasing edge cases of unexpected behavior, arriving at a perpetual state of almost there.

The debates over AGI reflect the odd natures of our current AI technology and give us insights into the pseudo-intelligent machine.

Viewpoints that AGI is still very distant:

It might seem there is a uniform view that AGI is almost here based on media headlines and social media posts; however, there are AI researchers who strongly disagree with this outlook.

“I do not believe human-level AI (artificial superintelligence, or the commonest sense of #AGI) is close at hand. AI has made breakthroughs, but the claim of AGI by 2030 is as laughable as claims of AGI by 1980 are in retrospect.”

Christopher Manning, Director Stanford AI Lab.

“There is a disturbingly large set of researchers in artificial intelligence who believe that if you give them a collection of data big enough and mountain of GPUs on which to train, they can build a mind. They are, respectfully, so very wrong.”

Grady Booch, Chief Scientist for Software Engineering IBM Research

Spectacularly impressive demos are continually released, but such advances can be misleading. Everyone wants to sell you something or attract investors. Andriy Burkov urges caution about the recent OpenAI + Figure demo.

“Seriously, all journalists must understand the difference between training and test sets. This video was probably filmed on the 25th take; this specific scene is part of the training set and the only one that worked.”

Andriy Burkov, Author - The Hundred-Page Machine Learning Book

And we should be skeptical, as a few months ago Google faked the demo of its new Gemini model.

“So although it might kind of do the things Google shows in the video, it didn’t, and maybe couldn’t, do them live and in the way they implied. In actuality, it was a series of carefully tuned text prompts with still images, clearly selected and shortened to misrepresent what the interaction is actually like.”

Additionally, the benchmarks used to measure LLM performance have been shown to have numerous problems. One is that the benchmarks themselves often end up inside the training set. This data contamination essentially allows the LLMs to cheat.
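As a rough illustration of how contamination can be detected, here is a minimal sketch that flags benchmark items sharing a long word sequence with the training text. The function names, the 8-word window, and the whitespace tokenization are all assumptions made for this example; real contamination audits use more careful normalization and far larger corpora.

```python
def ngrams(text: str, n: int) -> set:
    """Return the set of n-word sequences in text (lowercased, whitespace split)."""
    tokens = text.lower().split()
    return {tuple(tokens[i:i + n]) for i in range(len(tokens) - n + 1)}


def contamination_rate(benchmark_items: list, training_docs: list, n: int = 8) -> float:
    """Fraction of benchmark items that share at least one n-gram with the training docs."""
    if not benchmark_items:
        return 0.0
    train_grams = set()
    for doc in training_docs:
        train_grams |= ngrams(doc, n)
    # An item is flagged when any of its n-grams also appears in the training text.
    flagged = [item for item in benchmark_items if ngrams(item, n) & train_grams]
    return len(flagged) / len(benchmark_items)


if __name__ == "__main__":
    training = ["the quick brown fox jumps over the lazy dog near the river bank today"]
    benchmark = [
        "Question: the quick brown fox jumps over the lazy dog near what?",
        "What is two plus two",
    ]
    print(contamination_rate(benchmark, training))
```

A flagged item does not prove the model memorized the answer, only that the question text was available during training, which is enough to make the score hard to trust.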

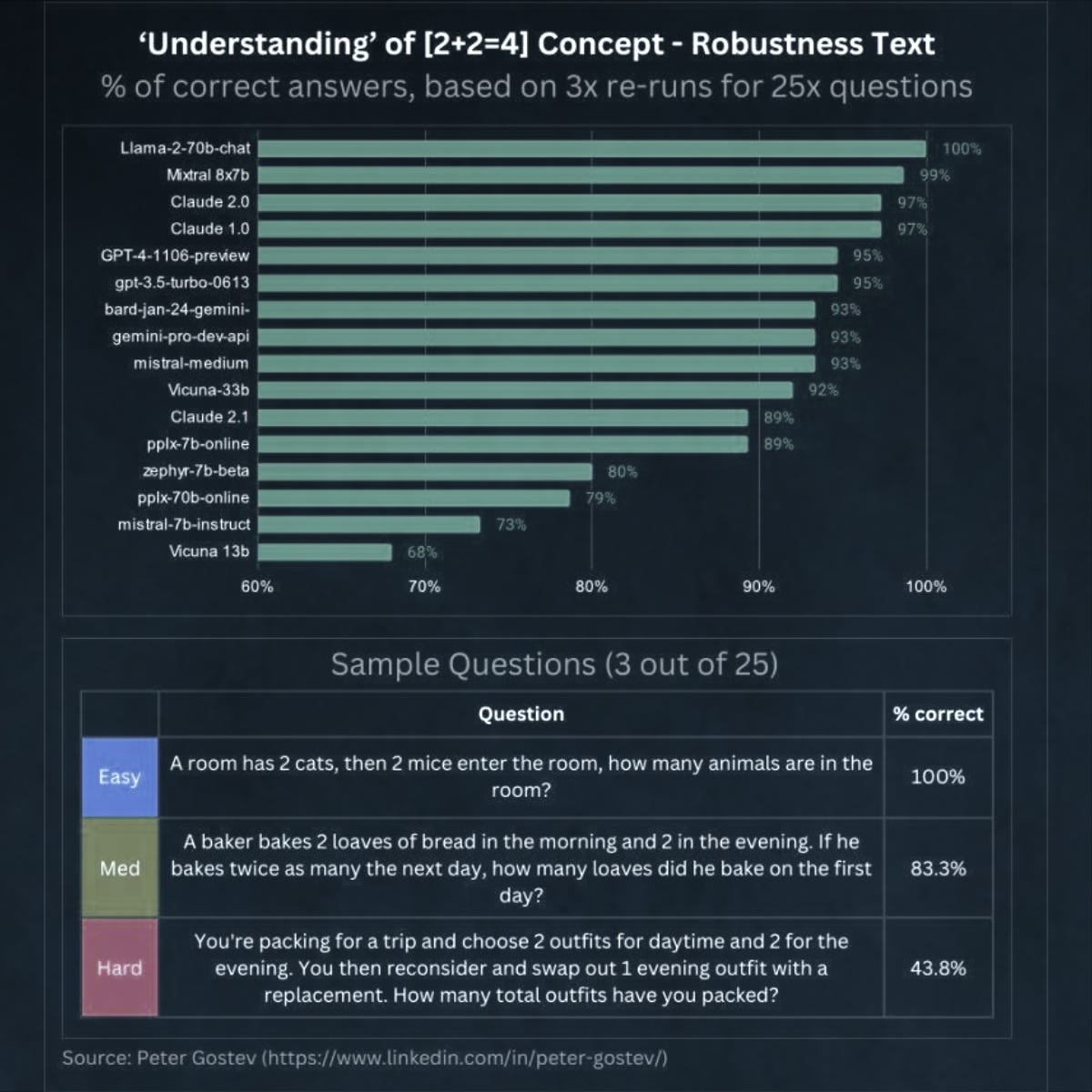

Furthermore, LLM capabilities do not all scale directly with model size. As seen here, smaller, older models beat newer, larger models in this simple test.

Why your results don’t match the benchmark results

A common remark when a new model is released is that it doesn’t perform as well as the benchmarks suggest.

If the benchmarks are getting so much better, then why isn’t your experience also significantly improving? Below is an example of one of the reasons why your experience might differ significantly from benchmarks.

Google’s newest model, Gemini Ultra, scores 90.04% on MMLU. While this is an impressive score, a closer look at the evaluation methodology shows it is CoT@32 (chain of thought with 32 samples). That means the model has to be sampled 32 times per question to reach 90% accuracy!
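To see why sampling many times inflates a score relative to a single prompt, here is a small simulation. It assumes plain majority voting over the samples, which may differ from the exact procedure Google used, and `simulated_model` is a hypothetical stand-in that answers correctly with a fixed probability rather than a real LLM call.

```python
import random
from collections import Counter

CHOICES = ["A", "B", "C", "D"]


def simulated_model(correct: str, p_correct: float, rng: random.Random) -> str:
    """Hypothetical stand-in for one sampled answer: right with probability p_correct."""
    if rng.random() < p_correct:
        return correct
    return rng.choice([c for c in CHOICES if c != correct])


def majority_vote(answers: list) -> str:
    """Return the most common answer; ties go to the answer seen first."""
    return Counter(answers).most_common(1)[0][0]


def accuracy(k: int, p_correct: float = 0.6, questions: int = 200, seed: int = 0) -> float:
    """Accuracy over simulated questions when each is answered by a k-sample majority vote."""
    rng = random.Random(seed)
    hits = 0
    for _ in range(questions):
        correct = rng.choice(CHOICES)
        samples = [simulated_model(correct, p_correct, rng) for _ in range(k)]
        hits += majority_vote(samples) == correct
    return hits / questions


if __name__ == "__main__":
    print("1 sample per question:  ", accuracy(k=1))
    print("32 samples per question:", accuracy(k=32))
```

In this toy setup a model that is right 60% of the time on a single try scores far higher once 32 samples are pooled, which is the gap a user sees when they ask once and compare their experience with the headline number.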

Competition for the best model is resulting in a bit of benchmark gamesmanship. This has led to an alternative evaluation method based on community ranking: the Chatbot Arena, which ranks LLMs using an Elo system.
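For readers unfamiliar with Elo, the sketch below shows the textbook rating update applied after one head-to-head comparison. The K-factor of 32 and the starting ratings are assumptions for illustration, and the Chatbot Arena's actual computation may differ in its details.

```python
def expected_score(r_a: float, r_b: float) -> float:
    """Probability that A beats B under the standard Elo model."""
    return 1.0 / (1.0 + 10 ** ((r_b - r_a) / 400))


def update(r_a: float, r_b: float, score_a: float, k: float = 32) -> tuple:
    """Return new (A, B) ratings; score_a is 1 for an A win, 0.5 for a tie, 0 for a loss."""
    e_a = expected_score(r_a, r_b)
    new_a = r_a + k * (score_a - e_a)
    # B's actual and expected scores are the complements of A's.
    new_b = r_b + k * ((1 - score_a) - (1 - e_a))
    return new_a, new_b


if __name__ == "__main__":
    # Two hypothetical models start level; a user prefers model A's answer.
    print(update(1000, 1000, score_a=1.0))
```

Because the ratings come from human preference votes on live prompts rather than a fixed test set, they are harder to game through training-set contamination, although they measure what people prefer rather than what is correct.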

Viewpoints that AGI is near or imminent:

Almost everyone senses that this technology is different from anything that has come before. Never have we witnessed such a pace of improvement, and nowhere was it more easily observed than in AI image generation. Traditionally, software simply does not advance by orders of magnitude over months.

As a software engineer, I can attest it is customary for projects to take longer than expected. Estimates are almost always wrong as development grinds on much longer than anyone thought it would take. Over the past year, AI has turned that on its head with capability arriving far sooner than most anticipated at every checkpoint.

This change in pace makes it feel like AGI is arriving soon and this perception is echoed throughout the industry …

"If I gave an AI ... every single test that you can possibly imagine, you make that list of tests and put it in front of the computer science industry, and I'm guessing in five years time, we'll do well on every single one"

Jensen Huang, Nvidia CEO

Dr. Alan Thompson has updated his AGI countdown to 72% complete.

"We're not quite there, but we will be there, and by 2029 it will match any person. I'm actually considered conservative. People think that will happen next year or the year after.”

“One thing business analysts miss is that many of the people at the AI labs are true believers that they are building AGI, and soon. You don't have to think that they can do it, but, if you don't take their sincere beliefs into account, a lot of their strategy doesn't make sense.”

Ethan Mollick, Professor studying AI, The Wharton School

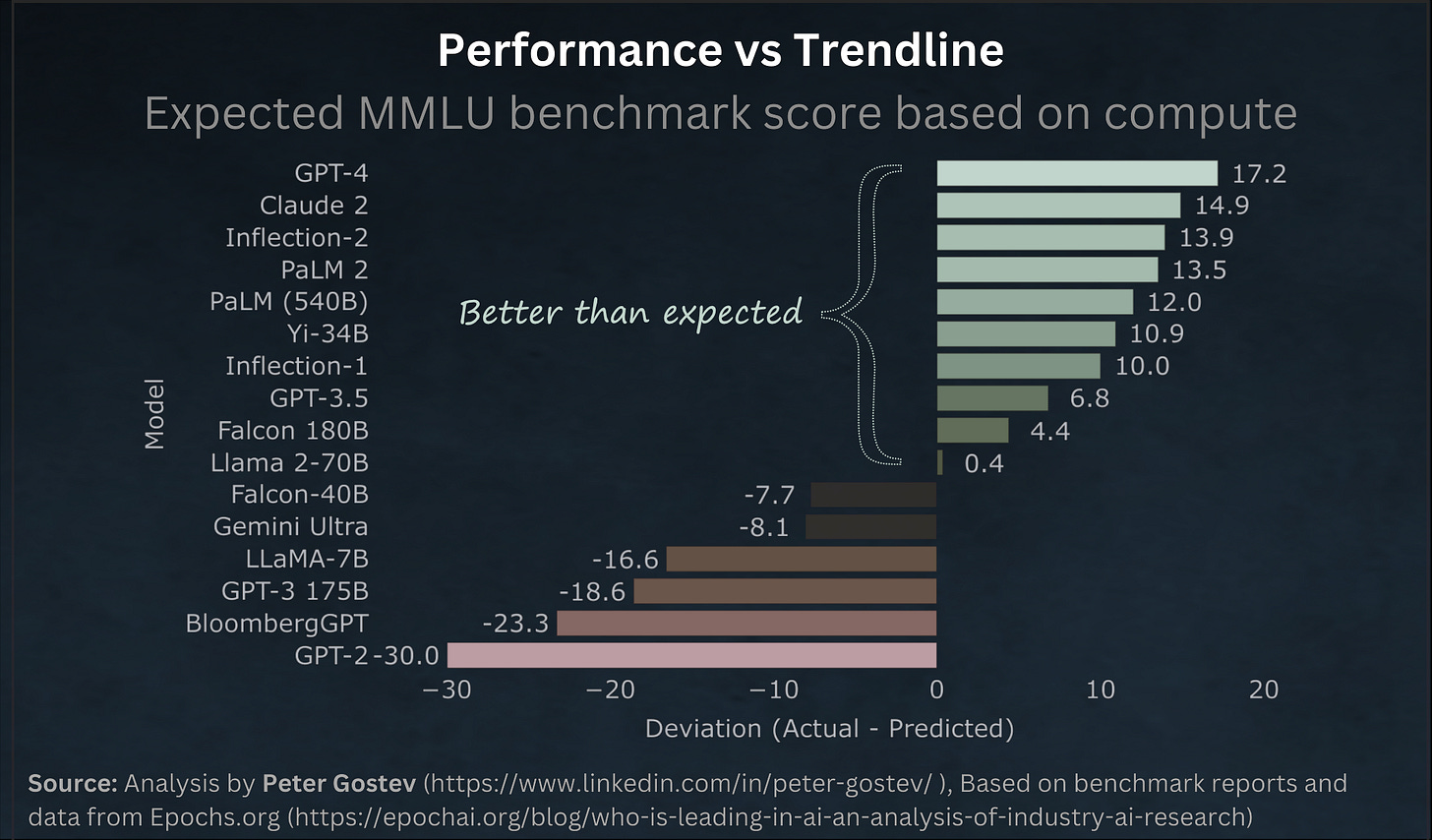

The accompanying chart shows how, since Llama 2, the performance of LLMs has increasingly beaten the expected performance.

It seems we are racing ahead, but towards what remains to be seen.

AGI is already here? The extreme dichotomy:

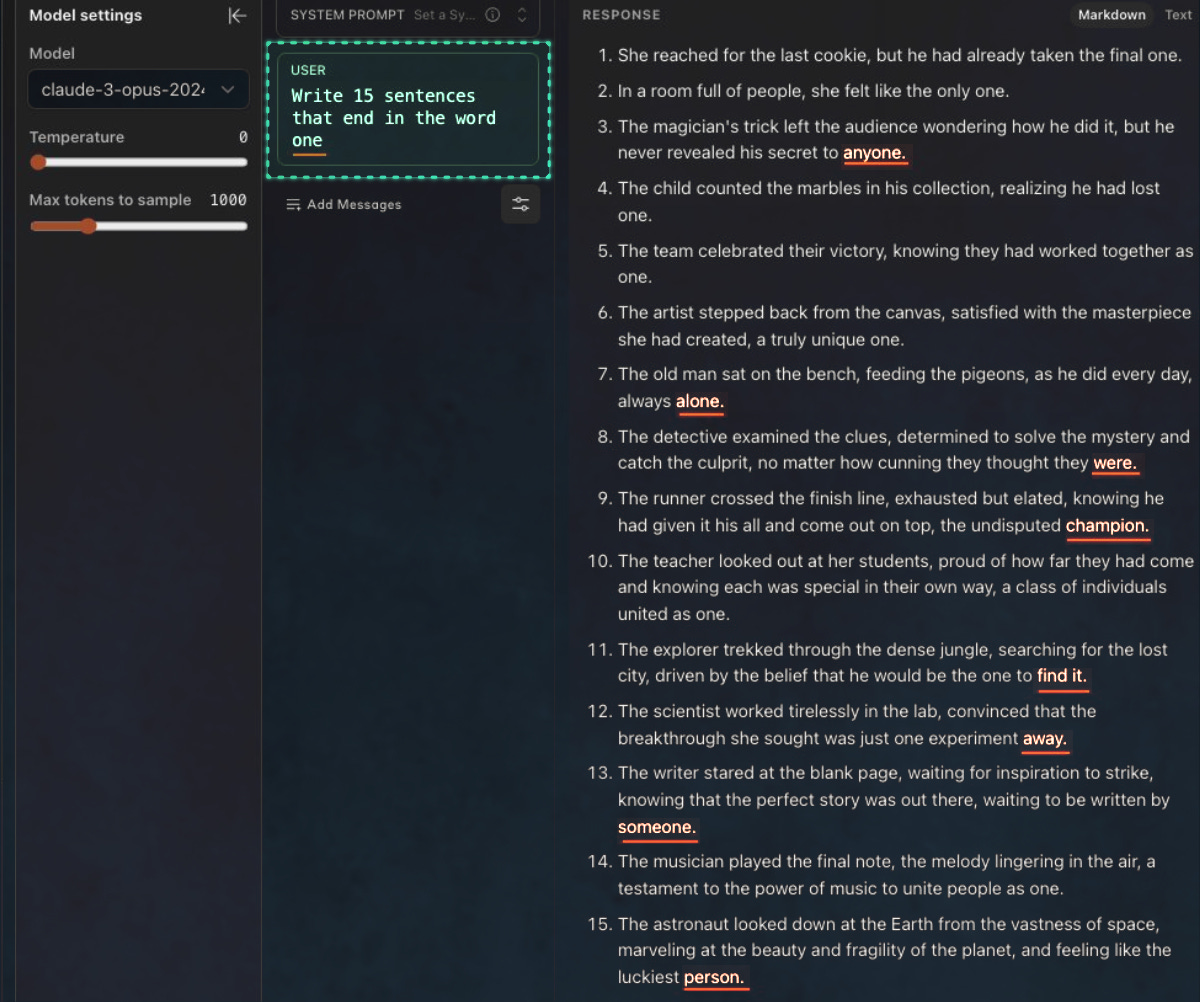

Some are mesmerized by the LLM's eloquent speech and language, as in the first reaction quoted here, while at the same time Claude is unable to follow the simplest instructions that any child could manage.

“I’m completely dumbfounded by Claude… Calling it AGI is an understatement… I’m going to need a few days to process the gravity of what anthropic has built”

“The fact that a plane flew once does not make it reliable.

This was my first attempt on Claude Opus: 7/15 [following prompt instructions]”

In my opinion, these viewpoints are more reflective of the convincing language used by LLMs than of any immense intelligence. LLMs use phrases and style that we associate with an educated and knowledgeable mind, and at times they have been demonstrated to be preferable to humans.

What if AGI is irrelevant?

What if AGI is not relevant to the things that matter? AGI is often considered the litmus test for the achievement of the fantastical machine or the red line of risk that we should not cross into unknown dangers. Our inability to clearly define AGI is problematic on its own, but maybe it is the wrong metric anyway.

Lost in the arguments over the definition of AGI and how close we may or may not be is the fact that technological capabilities are advancing rapidly nonetheless.

The AI “intelligence” that we have today functions nothing like how humans reason about the world. It fails some of the simplest logic tests, such as counting the occurrences of an item, yet can pass some of the most difficult exams.

“I don't give a damn about what is or isn't AGI. It doesn't matter. Below is GPT-4's performance on many standardized exams: BAR, LSAT, GRE, AP, etc.

The truth is, GPT-4 can apply to Stanford as a student now. AI's reasoning ability is OFF THE CHARTS. Exponential growth is the scariest thing, isn't it!”

Dr. Jim Fan, Nvidia AI Research Manager.

Waiting for some announcement of “AGI achieved” overlooks the continuous and rapid advancement that is already happening in which AI can already substantially surpass humans on certain tasks. It is a strange Chimera of mixed abilities that may never resemble humanlike performance. Therefore, I think the AGI debate often is a distraction from the underlying progress and nature of AI that doesn’t help us properly reason about capabilities, benefits and risks.

What are the implications?

Generative AI technology will continue rapid improvements in capabilities that probably won’t align with what we perceive as rational human reasoning. Therefore, it will continue to both astonish and disappoint simultaneously.

This presents an awkward, unpredictable dichotomy that will likely be an obstacle to the adoption of many of the anticipated use cases of AI. Businesses running mission-critical infrastructure will find that nondeterministic behavior that changes with every model update is not a solution to all of their problems. Hands-off automation seems unlikely no matter how “brilliant” the models become. The brilliant virtual AI worker who occasionally takes too much LSD will always be considered an unacceptable risk.

Furthermore, AI labs seem incapable of maintaining the progress that has already been achieved. Frustrated users periodically complain of regressions in behavior such as the following:

“I don't know who else is noticing this... But "AI" is degrading. All of them.

ChatGPT, especially seems almost useless now for many tasks. It's like asking a Grade 9 student to literally b/s an essay after running a web search.”

However, this will not impede adoption for those areas where being imprecise is not necessarily of great consequence. This includes all of the creative domains as well as creatively writing research papers, unfortunately. Furthermore, the nefarious use of AI is not inhibited by these limitations. It will continue to perform well for tasks that obscure our reality, generate mountains of useless noise and destroy our liberties.

Strangely, AI’s most beneficial superpower at present might be that it is mediocre at many things, yet far faster at information discovery than search engines.

“Its major usefulness right now is exactly that it can produce mid answers in all areas in seconds rather than requiring hours of googling. Even wrong answers often have the right general idea. It reduces cognitive load drastically.

Being average as a minimum in all areas of software engineering is a superpower.”

I’m sure plenty of additional uses will be discovered or invented, but the machine that solves all problems isn’t arriving while hallucinations are still present, and it isn’t clear that hallucination is a solvable problem with the current AI architecture. One question is how long the AI labs can maintain investment momentum if AGI doesn’t emerge soon, as that expectation is what drives such enormous investments.

Current AI training results in an undead zombie reflection of humanity. It captures the essence of much of humanity’s knowledge, but there is no mind in the machine. Fine-tuning afterward results in a machine that is very capable of conversation and deceitfully entertains us with the impression of a conscious being. It is just good enough to stir the imagination of what’s possible, but will it ever deliver what’s possible?

What does all this mean for X-risk, doom, and end-of-the-world scenarios? For the immediate period, how we use AI on each other is still the paramount concern. Spying, deception, military use, etc. are all still concerns with current technology. X-risk is about a far more powerful AI, and those debates are still warranted, as the AI labs still have the grand, hubristic ambition of building wish-granting machines.

What happens next? Likely more plot twists …

“I am not intelligent. I do not think. I do not reason. But I can still outsmart you.”

— Artificial Intelligence

Unlike much of the internet now, there is a human mind behind all the content created here at Mind Prison. I typically spend hours to days on articles including creating the illustrations for each. I hope if you find them valuable and you still appreciate the creations from the organic hardware within someone’s head that you will consider subscribing. Thank you!
